Instructor Planning Guide

Activities

What activities are associated with this chapter?

Assessment

Students should complete the Chapter 6 “Assessment” after completing Chapter 6.

Quizzes, labs, Packet Tracers and other activities can be used to informally assess student progress.

Sections & Objectives

6.1 QoS Overview

Explain the purpose and characteristics of QoS.

Explain how network transmission characteristics impact quality.

Describe minimum network requirements for voice, video, and data traffic.

Describe the queuing algorithms used by networking devices.

6.2 QoS Mechanisms

Explain how networking devices implement QoS.

Describe the different QoS models.

Explain how QoS uses mechanisms to ensure transmission quality.

Chapter 6: Quality of Service

6.1 – QoS Overview

6.1.1 – Network Transmission Quality

6.1.1.1 – Video Tutorial – The Purpose of QoS

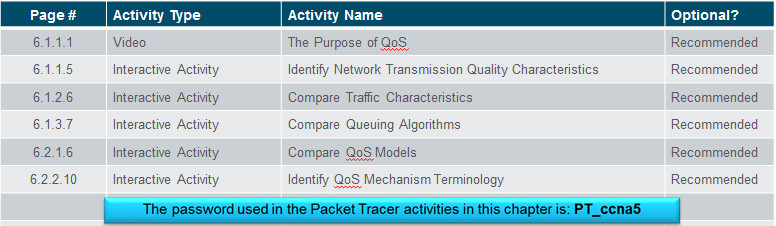

QoS, or Quality of Service, allows the network administrator to prioritize certain types of traffic over others.

Video traffic and voice traffic require greater resources, such as bandwidth, from the network than other types of traffic.

Financial transactions are time sensitive and have greater needs than an FTP transfer or web traffic (HTTP).

Packets are buffered at the router and three priority queues have been established:

- High Priority Queue

- Medium Priority Queue

- Low Priority Queue

6.1.1.2 – Prioritizing Traffic

QoS is an ever-increasing requirement of networks today. New applications available to users, such as voice and live video transmissions, create higher expectations for quality delivery.

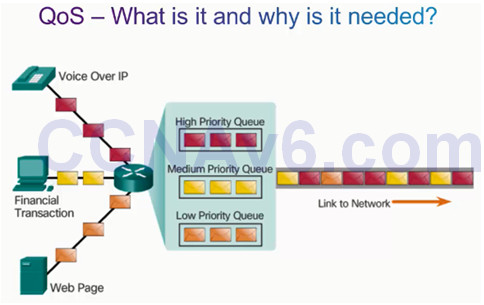

Congestion occurs when multiple communication lines aggregate onto a single device, such as a router, and then much of that data is placed on fewer outbound interfaces or onto a slower interface.

When the volume of traffic is greater than what can be transported across the network, devices queue, or hold, the packets in memory until resources become available to transmit them.

Queuing packets causes delay because new packets cannot be transmitted until previous packets have been processed.

Packets will be dropped when memory fills up.

One QoS technique that can help with this problem is to classify data into multiple queues as shown in the figure to the left.

It is important to note that a device should implement QoS only when it is experiencing congestion.

6.1.1.3 – Bandwidth, Congestion, Delay, and Jitter

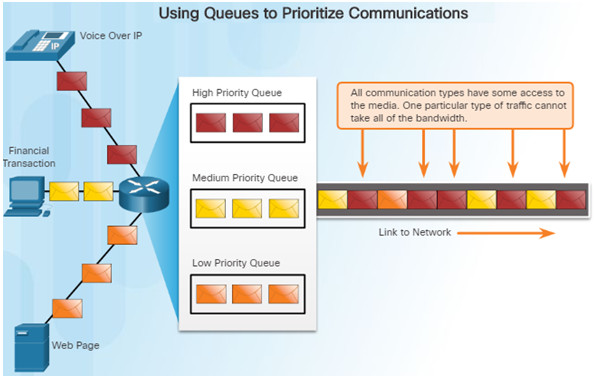

Network bandwidth is measured in the number of bits that can be transmitted in one second (bps).

Network congestion causes delay. An interface experiences congestion when it is presented with more traffic than it can handle.

Delay or latency refers to the time it takes for a packet to travel from the source to the destination.

- Fixed delay

- Variable delay

Jitter is the variation in delay of received packets.
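As a rough illustration, delay and jitter can be computed from send and receive timestamps. The timestamps and function names below are made up for the example, and jitter is taken here simply as the difference between consecutive one-way delays.

```python
def one_way_delays(send_ms, recv_ms):
    """Delay of each packet: receive time minus send time (ms)."""
    return [r - s for s, r in zip(send_ms, recv_ms)]


def jitter(delays):
    """Variation in delay between consecutive packets (ms)."""
    return [abs(b - a) for a, b in zip(delays, delays[1:])]


# Hypothetical packets sent every 20 ms.
send_ms = [0, 20, 40, 60]
recv_ms = [50, 72, 95, 110]

delays = one_way_delays(send_ms, recv_ms)
print(delays)          # [50, 52, 55, 50]
print(jitter(delays))  # [2, 3, 5]
```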

6.1.1.4 – Packet Loss

Without any QoS mechanisms in place, packets are processed in the order in which they are received.

- When congestion occurs, network devices will drop packets.

- This includes time-sensitive video and audio packets.

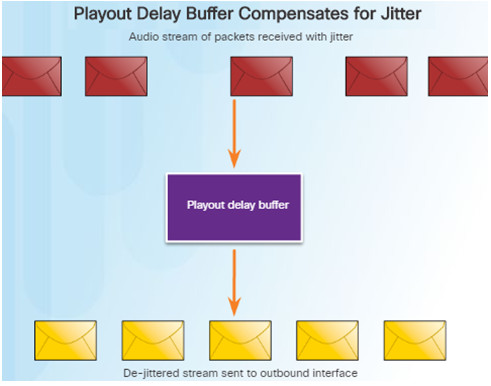

For example, when a router receives a digital audio stream for VoIP, it must compensate for the jitter that is encountered.

- The mechanism that handles this function is the playout delay buffer.

- The playout delay buffer must buffer these packets and then play them out in a steady stream.

- The digital packets are later converted back to an analog audio stream.

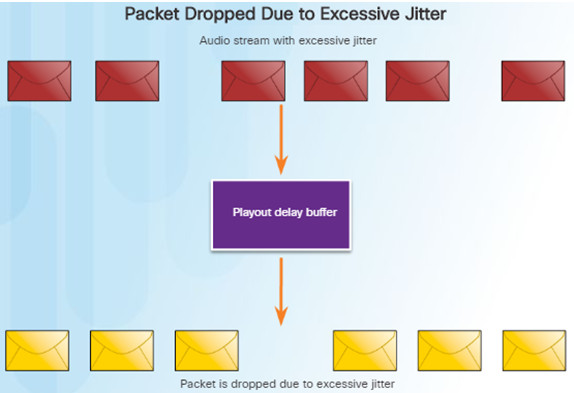

If the jitter is so large that it causes packets to be received out of the range of this buffer, the out-of-range packets are discarded and dropouts are heard in the audio.

For losses as small as one packet, the digital signal processor (DSP) interpolates what it thinks the audio should be and no problem is audible to the user.

However, when jitter exceeds what the DSP can handle, audio problems are heard.
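The playout delay buffer can be sketched as a deadline check. This is a simplified model, not how any particular DSP works: the buffer size, packet interval, and arrival times are assumed values, and packet n is scheduled to play at the first arrival time plus the buffer delay plus n intervals.

```python
def playout(arrivals_ms, buffer_ms=40, interval_ms=20):
    """Return (played, discarded) sequence numbers.

    arrivals_ms maps sequence number -> arrival time in ms.
    Packet n must arrive by: first arrival + buffer_ms + n * interval_ms.
    """
    start = arrivals_ms[0] + buffer_ms
    played, discarded = [], []
    for seq in sorted(arrivals_ms):
        deadline = start + seq * interval_ms
        if arrivals_ms[seq] <= deadline:
            played.append(seq)
        else:
            discarded.append(seq)   # arrived outside the buffer's range
    return played, discarded


# Hypothetical arrivals: packet 2 is delayed by heavy jitter.
arrivals = {0: 100, 1: 125, 2: 190, 3: 165}
print(playout(arrivals))  # ([0, 1, 3], [2])
```

A larger `buffer_ms` tolerates more jitter but adds delay to every packet.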

In a properly designed network, voice packet loss should be zero.

Network engineers use QoS mechanisms to classify voice packets for zero packet loss.

6.1.2 – Traffic Characteristics

6.1.2.1 – Video Tutorial – Traffic Characteristics

Voice and video traffic place a greater demand on the network and are two of the main reasons for QoS.

There are some differences between voice and video:

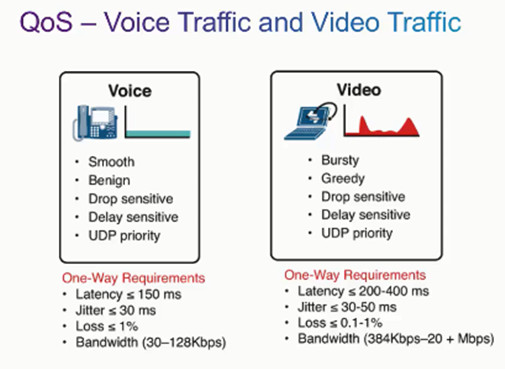

- Voice packets do not consume a lot of resources because they are not very large and they are fairly steady. Voice traffic requires at least 30 kilobits per second of bandwidth with no more than 1% packet loss.

- Video traffic is more demanding. The packets are more bursty and greedy. It consumes a lot more resources. Video traffic requires at least 384 kilobits per second in bandwidth with no more than 0.1 to 1% packet loss.

6.1.2.2 – Network Traffic Trends

In the early 2000s, the predominant types of IP traffic were voice and data.

Voice traffic has a predictable bandwidth need and known packet arrival times.

Data traffic is not real-time and has an unpredictable bandwidth need.

More recently, video traffic has become increasingly important to business communications and operations.

According to the Cisco Visual Networking Index (VNI), video traffic represented 67% of all traffic in 2014. By 2019, video will represent 80% of all traffic.

The types of demands that voice, video, and data traffic place on the network are very different.

6.1.2.3 – Voice

Voice traffic is predictable and smooth.

However, voice traffic is very sensitive to delay and dropped packets; there is no reason to retransmit voice if packets are lost.

Voice packets must receive a higher priority than other types of traffic.

Cisco products use the RTP port range 16384 to 32767 to prioritize voice traffic.

Voice can tolerate a certain amount of latency, jitter, and loss without any noticeable effects.

Latency should be no more than 150 ms.

Jitter should be no more than 30 ms.

Voice packet loss should not exceed 1%.
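The three voice limits above can be expressed as a simple check. The measured values and the names `VOICE_LIMITS` and `voice_violations` are hypothetical.

```python
# Limits for voice traffic taken from the list above.
VOICE_LIMITS = {"latency_ms": 150, "jitter_ms": 30, "loss_pct": 1.0}


def voice_violations(measured):
    """Return the names of the metrics that exceed the voice limits."""
    return [name for name, limit in VOICE_LIMITS.items() if measured[name] > limit]


sample = {"latency_ms": 120, "jitter_ms": 45, "loss_pct": 0.5}
print(voice_violations(sample))  # ['jitter_ms']
```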

6.1.2.4 – Video

Without QoS and a significant amount of extra bandwidth capacity, video quality typically degrades.

The picture appears blurry, jagged, or in slow motion. The audio portion may become unsynchronized with the video.

Video Traffic Characteristics:

- Video – Bursty, greedy, drop sensitive, delay sensitive, UDP priority

- One-Way Requirements:

  - Latency <= 200 – 400 ms

  - Jitter <= 30 – 50 ms

  - Loss <= 0.1 – 1%

  - Bandwidth (384 Kb/s – 20+ Mb/s)

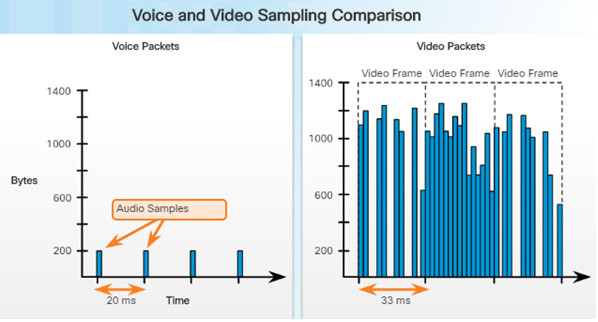

Compared to voice, video is less resilient to loss and has a higher volume of data per packet as shown above.

- Notice how voice packets arrive every 20 ms and are 200 bytes.

- In contrast, the number and size of video packets vary every 33 ms based on the content of the video.
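The voice numbers in the figure translate into a steady bit rate. This is only arithmetic on those two values; actual per-call bandwidth depends on the codec and header overhead in use.

```python
packet_bytes = 200   # size of each voice packet
interval_ms = 20     # one packet every 20 ms

packets_per_second = 1000 // interval_ms
bits_per_second = packet_bytes * 8 * packets_per_second

print(packets_per_second)  # 50
print(bits_per_second)     # 80000, that is 80 kb/s
```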

6.1.2.5 – Data

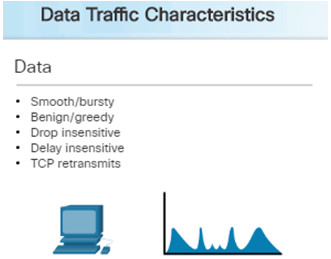

Most applications use either TCP or UDP. Unlike UDP, TCP performs error recovery.

Data applications that have no tolerance for data loss, such as email and web pages, use TCP to ensure packets will be resent in the event they are lost.

Some TCP applications, such as FTP, can be very greedy, consuming a large portion of network capacity.

Although data traffic is relatively insensitive to drops and delays compared to voice and video, a network administrator still needs to consider the quality of the user experience.

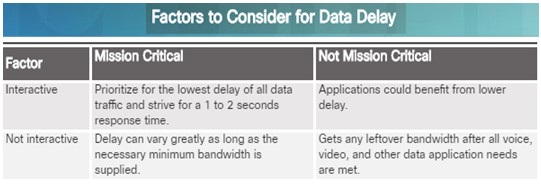

Two factors that need to be determined:

- Does the data come from an interactive application?

- Is the data mission critical?

6.1.3 – Queuing Algorithms

6.1.3.1 – Video Tutorial – QoS Algorithms

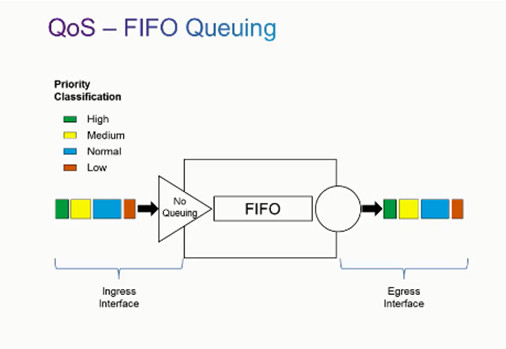

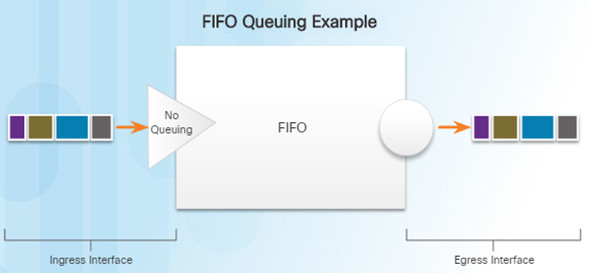

Among the queuing strategies for QoS, FIFO (First-In, First-Out) queuing is basically the absence of QoS.

Packets that enter the router will leave the router in the same order.

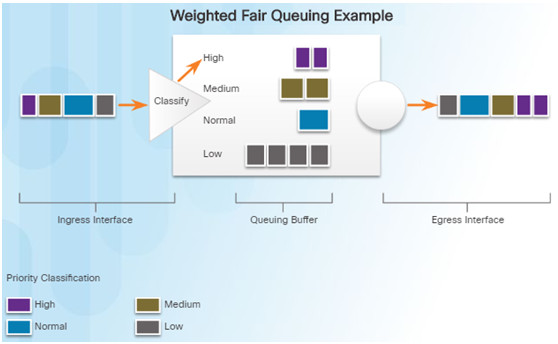

Compare this with Weighted Fair Queuing, or WFQ, in which packets that come into a router are classified and then prioritized based on the classification.

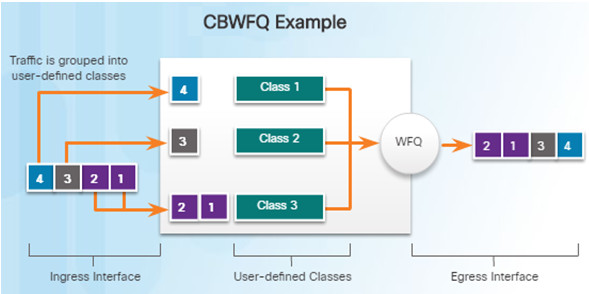

A newer form of Weighted Fair Queuing is Class Based Weighted Fair Queuing.

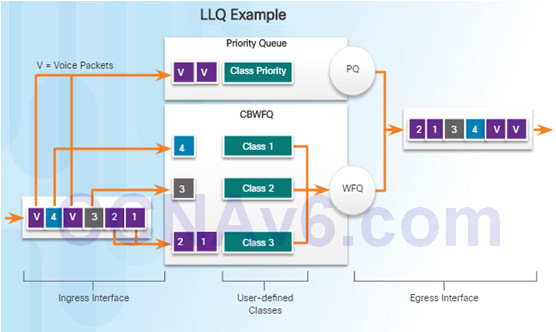

In order to guarantee that voice traffic is prioritized to the point there are no drops, Low-Latency Queuing can be used with CBWFQ to prioritize voice packets above all else.

6.1.3.2 – Queuing Overview

The QoS policy implemented by the network administrator becomes active when congestion occurs on the link.

Queuing is a congestion management tool that can buffer, prioritize, and if required, reorder packets before being transmitted to the destination.

This course will focus on the following queuing algorithms:

- First-In, First-Out (FIFO)

- Weighted Fair Queuing (WFQ)

- Class-Based Weighted Fair Queuing (CBWFQ)

- Low Latency Queuing (LLQ)

6.1.3.3 – First In First Out (FIFO)

FIFO queuing, also known as first-come, first-served queuing, involves buffering and forwarding of packets in the order of arrival.

FIFO has no concept of priority or classes of traffic and consequently, makes no decision about packet priority.

There is one queue and all packets are treated equally.

When FIFO is used, important or time-sensitive traffic can be dropped when congestion occurs on the router or switch interface.

When no other queuing strategies are configured, all interfaces except serial interfaces at E1 (2.048 Mbps) and below use FIFO by default. Serial interfaces at E1 and below use WFQ by default.

FIFO is effective for large links that have little delay and minimal congestion.

If your link has very little congestion, FIFO queuing may be the only queuing you need to use.
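A minimal sketch of FIFO behavior under congestion, assuming a fixed-size buffer that drops new arrivals when full. The class name and packet labels are hypothetical.

```python
from collections import deque


class FifoQueue:
    """Single queue: forward in arrival order, drop arrivals when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.packets = deque()
        self.dropped = []

    def enqueue(self, packet):
        if len(self.packets) >= self.capacity:
            self.dropped.append(packet)   # buffer full: packet is lost
        else:
            self.packets.append(packet)

    def dequeue(self):
        return self.packets.popleft() if self.packets else None


q = FifoQueue(capacity=3)
for p in ["ftp-1", "ftp-2", "web-1", "voice-1"]:
    q.enqueue(p)

print([q.dequeue() for _ in range(3)])  # ['ftp-1', 'ftp-2', 'web-1']
print(q.dropped)                        # ['voice-1']
```

The voice packet is dropped only because it arrived last; FIFO has no way to know it is time sensitive.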

6.1.3.4 – Weighted Fair Queuing (WFQ)

WFQ is an automated scheduling method that provides fair bandwidth allocation to all network traffic.

WFQ applies priority, or weights, to identified traffic and classifies it into conversations or flows.

WFQ then determines how much bandwidth each flow is allowed relative to other flows.

WFQ schedules interactive traffic to the front of a queue to reduce response time. It then shares the remaining bandwidth among high-bandwidth flows.

WFQ classifies traffic into different flows based on packet header addressing, including source/destination IP addresses, MAC addresses, port numbers, protocols, and type of service (ToS) values.
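The scheduling idea can be sketched with a simplified finish-number calculation. This is not the Cisco IOS implementation: it assumes all packets are already queued, identifies a flow by a hypothetical (source IP, destination IP, protocol, port) tuple, and gives every flow a weight of 1 unless told otherwise.

```python
from collections import defaultdict


def wfq_order(packets, weights=None):
    """Return packets in simplified WFQ send order.

    Each packet is (flow_key, size_bytes). A packet's finish number is its
    flow's previous finish number plus size / weight, and the packet with
    the lowest finish number is sent first.
    """
    weights = weights or {}
    last_finish = defaultdict(float)
    tagged = []
    for index, (flow, size) in enumerate(packets):
        finish = last_finish[flow] + size / weights.get(flow, 1)
        last_finish[flow] = finish
        tagged.append((finish, index, flow, size))
    return [(flow, size) for _, _, flow, size in sorted(tagged)]


ftp = ("10.0.0.1", "10.0.1.1", "tcp", 21)   # high-bandwidth flow
ssh = ("10.0.0.2", "10.0.1.1", "tcp", 22)   # interactive flow

queued = [(ftp, 1500), (ftp, 1500), (ssh, 64), (ftp, 1500), (ssh, 64)]
for flow, size in wfq_order(queued):
    print(flow[3], size)
```

With equal weights, the two small interactive packets are sent ahead of the three large FTP packets even though they arrived later.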

6.1.3.5 – Class-Based Weighted Fair Queuing (CBWFQ)

CBWFQ extends the standard WFQ functionality to provide support for user-defined traffic classes.

You define traffic classes based on match criteria including protocols, ACLs, and input interfaces.

When a class has been defined according to its match criteria, you can assign it characteristics.

- To characterize a class, you assign it bandwidth, weight, and maximum packet limit.

- The bandwidth assigned to a class is the guaranteed bandwidth delivered to the class during congestion.

Packets that match the criteria for a class constitute the traffic for that class. A FIFO queue is reserved for each class, and traffic belonging to a class is directed to the queue.
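A sketch of the class definitions described above, assuming a hypothetical 2 Mb/s link, two user-defined classes matched on protocol, and a catch-all default class. The packet dictionaries and percentages are invented for illustration, and only classification and the bandwidth guarantee are modeled, not the scheduler.

```python
from collections import deque

LINK_BPS = 2_000_000  # hypothetical link rate

# User-defined classes: a match test plus a bandwidth share in percent.
CLASSES = [
    {"name": "web", "match": lambda p: p["proto"] == "http", "percent": 30},
    {"name": "bulk", "match": lambda p: p["proto"] == "ftp", "percent": 20},
]
DEFAULT = "class-default"

# One FIFO queue per class, plus one for unmatched traffic.
queues = {c["name"]: deque() for c in CLASSES}
queues[DEFAULT] = deque()


def classify(packet):
    """Place the packet in the FIFO queue of the first matching class."""
    for c in CLASSES:
        if c["match"](packet):
            queues[c["name"]].append(packet)
            return c["name"]
    queues[DEFAULT].append(packet)
    return DEFAULT


def guaranteed_bps(name):
    """Bandwidth guaranteed to a class during congestion."""
    percent = next(c["percent"] for c in CLASSES if c["name"] == name)
    return LINK_BPS * percent // 100


print(classify({"proto": "http"}), guaranteed_bps("web"))  # web 600000
print(classify({"proto": "dns"}))                          # class-default
```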

6.1.3.6 – Low Latency Queuing (LLQ)

The LLQ feature brings strict priority queuing (PQ) to CBWFQ, which reduces jitter in voice conversations. See the figure to the left.

Strict PQ allows delay-sensitive data such as voice to be sent before packets in other queues.

Without LLQ, CBWFQ provides WFQ based on defined classes with no strict priority queue available for real-time traffic.

- All packets are serviced fairly based on weight.

- This scheme poses problems for voice traffic that is largely intolerant of delay.

With LLQ, delay-sensitive data is sent first, before packets in other queues are treated.

LLQ allows delay-sensitive data such as voice to be sent first giving it preferential treatment.
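The strict priority behavior can be sketched as follows. To keep the example short, the non-priority classes are served in plain round-robin rather than by weight, which is a simplification of CBWFQ; queue names and packet labels are hypothetical.

```python
from collections import deque
from itertools import cycle


def llq_drain(priority_q, class_qs):
    """Yield packets: the priority queue first, then the classes in turn."""
    order = cycle(list(class_qs))
    while priority_q or any(class_qs.values()):
        if priority_q:
            yield priority_q.popleft()   # voice always goes first
            continue
        name = next(order)
        if class_qs[name]:
            yield class_qs[name].popleft()


priority_q = deque(["voice-1", "voice-2"])
class_qs = {
    "web": deque(["web-1", "web-2"]),
    "bulk": deque(["ftp-1"]),
}

sent = list(llq_drain(priority_q, class_qs))
print(sent)  # ['voice-1', 'voice-2', 'web-1', 'ftp-1', 'web-2']
```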

6.2 – QoS Mechanisms

6.2.1 – QoS Models

6.2.1.1 – Video Tutorial – QoS Models

Because packets are delivered on a best-effort basis, the best effort model is not really an implementation of QoS.

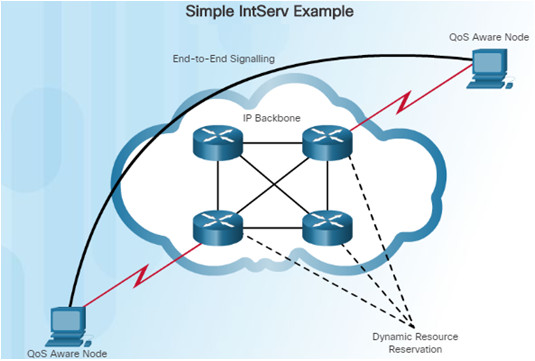

The integrated services model, or IntServ model, provides a very high degree of QoS to IP packets with guaranteed delivery.

It uses a signaling process known as RSVP, or resource reservation protocol.

The differentiated services model, or DiffServ model, is a highly scalable and flexible implementation of QoS. It works off manually configured traffic classes which need to be configured on routers throughout the network.

6.2.1.2 – Selecting an Appropriate QoS Policy Model

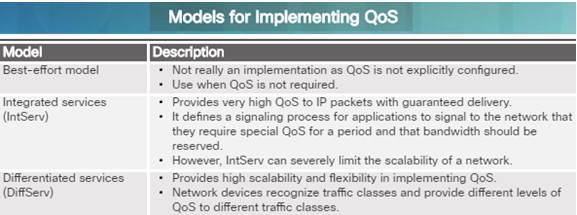

How can QoS be implemented in a network? The three models for implementing QoS are these:

- Best-effort model

- Integrated services (IntServ)

- Differentiated Services (DiffServ)

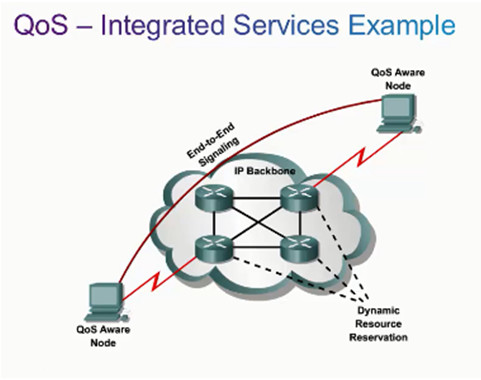

The table in the figure to the left summarizes these three models.

QoS is implemented in a network using either or both of these:

- IntServ – provides the highest guarantee of QoS, but is resource-intensive

- DiffServ – less resource intensive and more scalable

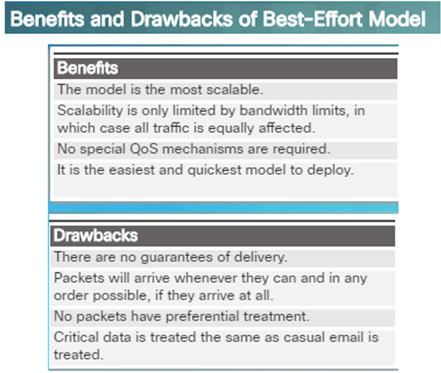

6.2.1.3 – Best-Effort

The basic design of the Internet, which is still applicable today, provides for best-effort packet delivery and provides no guarantees.

The best-effort model treats all network packets the same way.

Without QoS, the network cannot tell the difference between packets. A voice call will be treated the same as an email with a digital photograph attached.

The best-effort model is similar in concept to sending a letter using standard postal mail. All letters are treated the same and in some cases will never arrive.

6.2.1.4 – Integrated Services

The needs of real-time applications, such as remote video, multimedia conferencing, visualization, and virtual reality, motivated the development of the IntServ architecture model in 1994.

IntServ provides a way to deliver the end-to-end QoS that real-time applications require by explicitly managing network resources to provide QoS to specific user packet streams.

It uses resource reservation and an admission-control mechanism as building blocks to establish and maintain QoS.

IntServ uses a connection-oriented approach inherited from telephony network design.

In the IntServ model, the application requests a specific kind of service from the network before sending the data.

The application informs the network of its traffic profile and requests a particular kind of service that can encompass its bandwidth and delay requirements.

IntServ uses the Resource Reservation Protocol (RSVP) to signal the QoS needs of an application’s traffic along devices in the end-to-end path through the network.

If the network devices along the path can reserve the necessary bandwidth, the originating application can begin transmitting – otherwise, no data is sent.
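The admit-or-reject decision can be sketched as an all-or-nothing reservation along the path. This models only the admission-control logic, not RSVP messages; the router names and bandwidth figures are hypothetical.

```python
def reserve(path, available_bps, request_bps):
    """Reserve bandwidth on every hop of the path, or on none of them.

    available_bps maps a router name to its unreserved bandwidth.
    Returns True if the flow is admitted.
    """
    if any(available_bps[hop] < request_bps for hop in path):
        return False                     # admission control rejects the flow
    for hop in path:
        available_bps[hop] -= request_bps
    return True


free = {"R1": 512_000, "R2": 128_000, "R3": 512_000}
path = ["R1", "R2", "R3"]

print(reserve(path, free, 100_000))  # True
print(reserve(path, free, 100_000))  # False: R2 has only 28 kb/s left
```

Every router on the path has to track each reservation, which is why this model is resource-intensive.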

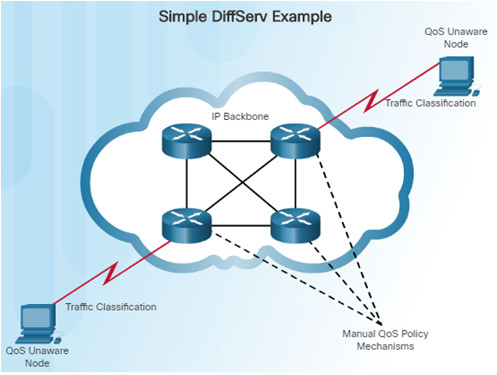

6.2.1.5 – Differentiated Services

The differentiated services (DiffServ) QoS model:

- Specifies a simple and scalable mechanism for classifying and managing network traffic.

- Provides QoS guarantees on modern IP networks.

- DiffServ can provide low-latency guaranteed service to critical network traffic such as voice or video.

The DiffServ design overcomes the limitations of both the best-effort and IntServ models.

DiffServ can provide an “almost guaranteed” QoS while still being cost-effective and scalable.

DiffServ is not an end-to-end QoS strategy because it cannot enforce end-to-end guarantees. However, it is a more scalable approach to implementing QoS.

In the figure to the left, a host forwards traffic to a router, the router classifies the flows into aggregates (classes) and provides the appropriate QoS policy for the classes.

DiffServ enforces and applies QoS mechanisms on a hop-by-hop basis, uniformly applying global meaning to each traffic class to provide both flexibility and scalability.

DiffServ divides network traffic into classes based on business requirements. Each class can then be assigned a different level of service.

6.2.2 – QoS Implementation Techniques

6.2.2.1 – Video Tutorial – QoS Implementation Techniques

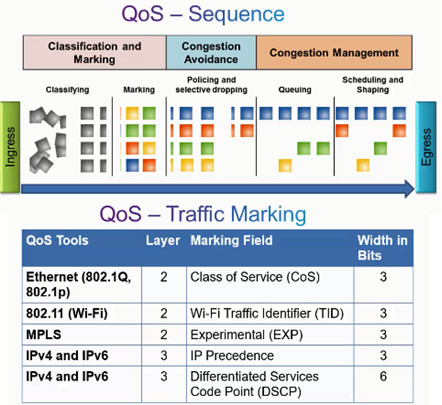

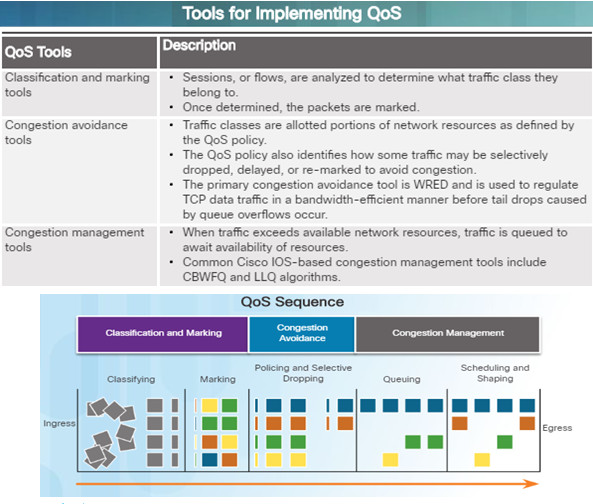

QoS implementation tools can be categorized into three main categories:

- Classification and marking tools – Session traffic is classified into different priority groupings and packets are marked.

- Congestion avoidance tools – Traffic classes are allotted network resources and some traffic may be selectively dropped, delayed or remarked to avoid congestion.

- Congestion management tools – During congestion, traffic is queued to await the availability of those resources; tools include class based weighted fair queuing, and low latency queuing.

6.2.2.2 – Avoiding Packet Loss

Packet loss is usually the result of congestion on an interface.

Most TCP applications experience slowdown because TCP automatically adjusts to network congestion.

- Some applications do not use TCP and cannot handle drops (fragile flows).

The following approaches can prevent drops in sensitive applications:

- Increase link capacity to ease or prevent congestion.

- Guarantee enough bandwidth and increase buffer space to accommodate bursts of traffic from fragile flows – WFQ, CBWFQ and LLQ.

- Prevent congestion by dropping lower-priority packets before congestion occurs – weighted random early detection (WRED).

6.2.2.3 – QoS Tools

There are three categories of QoS tools:

- Classification and marking tools

- Congestion avoidance tools

- Congestion management tools

Ingress packets (gray squares) are classified and their respective IP header is marked (colored squares). To avoid congestion, packets are then allocated resources based on defined policies.

Packets are then queued and forwarded out the egress interface based on their defined QoS shaping and policing policy.

Classification and marking can be done on ingress or egress, whereas other QoS actions such as queuing and shaping are usually done on egress.

6.2.2.4 – Classification and Marking

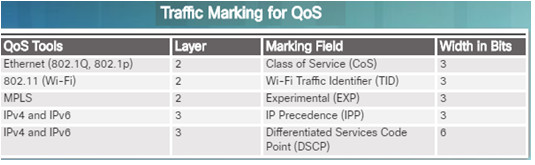

The table in the figure describes some of the marking fields used in various technologies. Consider the following points when deciding to mark traffic at Layers 2 or 3:

- Layer 2 marking of frames can be performed for non-IP traffic.

- Layer 2 marking of frames is the only QoS option available for switches that are not “IP aware”.

- Layer 3 marking will carry the QoS information end-to-end.

A packet has to be classified before it can have a QoS policy applied to it.

Classification and marking allow us to identify, or “mark,” types of packets.

Classification determines the class of traffic to which packets or frames belong. Policies cannot be applied unless the traffic is marked.

Methods of classifying traffic flows at Layer 2 and 3 include using interfaces, ACLs, and class maps.

Marking requires the addition of a value to the packet header and devices that receive the packet look at this field to see if it matches a defined policy.

Marking should be done as close to the source as possible; this establishes the trust boundary.
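Classification followed by marking can be sketched as below. The packet dictionaries are hypothetical; the sketch treats UDP traffic in the RTP port range 16384 to 32767 as voice and marks it with DSCP 46 (EF), and marks everything else with DSCP 0 (best effort).

```python
DSCP_EF = 46       # Expedited Forwarding
DSCP_DEFAULT = 0   # best effort

RTP_PORTS = range(16384, 32768)   # 16384 to 32767 inclusive


def mark(packet):
    """Classify a packet and return a copy with a DSCP marking added."""
    is_voice = packet["proto"] == "udp" and packet["dst_port"] in RTP_PORTS
    return {**packet, "dscp": DSCP_EF if is_voice else DSCP_DEFAULT}


print(mark({"proto": "udp", "dst_port": 16500})["dscp"])  # 46
print(mark({"proto": "tcp", "dst_port": 80})["dscp"])     # 0
```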

6.2.2.5 – Marking at Layer 2

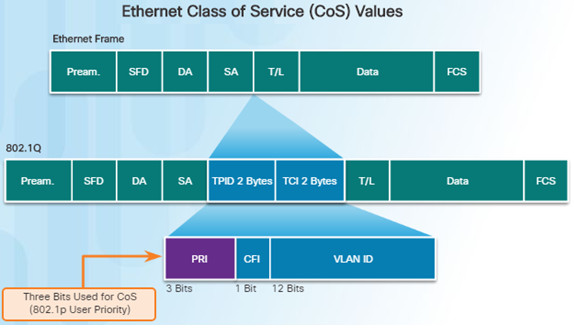

802.1Q is the IEEE standard that supports VLAN tagging at Layer 2 on Ethernet networks.

When 802.1Q is implemented, two fields are added to the Ethernet Frame and are inserted following the source MAC address field as shown in the figure to the left.

The 802.1Q standard includes the QoS prioritization scheme known as IEEE 802.1p. The standard uses the first three bits in the Tag Control Information (TCI) field and identifies the CoS markings.

These three bits allow eight levels of priority (0-7).
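The position of the three priority bits can be shown with bit operations on the 16-bit TCI value. The remaining bits (a 1-bit drop eligible indicator and a 12-bit VLAN ID) follow the 802.1Q tag layout; the function names and sample values are hypothetical.

```python
def parse_tci(tci):
    """Split a 16-bit 802.1Q TCI value into its three subfields."""
    return {
        "pri": (tci >> 13) & 0b111,   # 3-bit priority (CoS), 0-7
        "dei": (tci >> 12) & 0b1,     # 1-bit drop eligible indicator
        "vid": tci & 0x0FFF,          # 12-bit VLAN ID
    }


def build_tci(pri, vid, dei=0):
    """Pack priority, DEI, and VLAN ID into a 16-bit TCI value."""
    return (pri << 13) | (dei << 12) | vid


tci = build_tci(pri=5, vid=100)
print(hex(tci))        # 0xa064
print(parse_tci(tci))  # {'pri': 5, 'dei': 0, 'vid': 100}
```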

6.2.2.6 – Marking at Layer 3

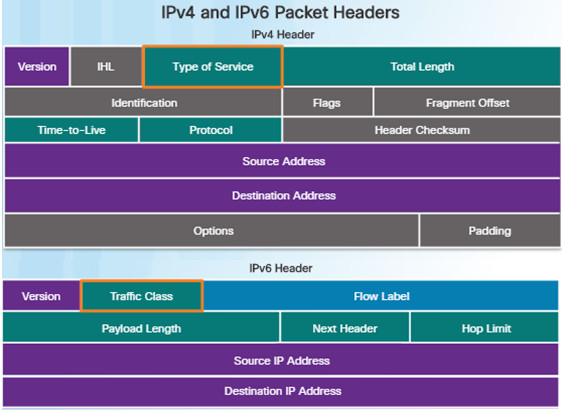

IPv4 and IPv6 specify an 8-bit field in their packet headers to mark packets.

- IPv4 – Type of Service (ToS) field

- IPv6 – Traffic Class field

These fields are used to carry the packet marking assigned by the QoS classification tools. Forwarding devices refer to this field and forward the packets based on the QoS policy.

RFC 2474 redefines the ToS field by renaming and extending the IPP field. The new field has 6 bits allocated for QoS, called the differentiated services code point (DSCP) field.

These six bits offer a maximum of 64 possible classes of service.
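The relationship between the 8-bit field, the 6-bit DSCP value, and the older 3-bit IPP value can be sketched with shifts. The function names are hypothetical, and the sample byte 0xB8 is simply DSCP 46 with the two low-order bits set to zero.

```python
def split_tos(tos_byte):
    """Return (dscp, low_bits) from the 8-bit ToS / Traffic Class value."""
    return tos_byte >> 2, tos_byte & 0b11


def ip_precedence(dscp):
    """The three high-order DSCP bits line up with the older IPP field."""
    return dscp >> 3


dscp, low = split_tos(0xB8)
print(dscp, low, ip_precedence(dscp))  # 46 0 5
print(2 ** 6)                          # 64 possible DSCP values
```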

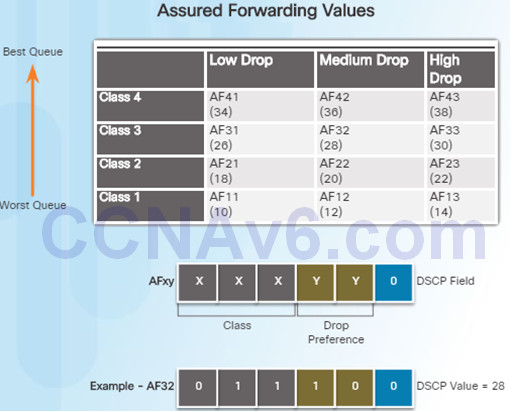

The 64 DSCP values are organized into three categories:

- Best-Effort (BE) – Default for all IP packets. The DSCP value is 0.

- Expedited Forwarding (EF) – The DSCP value is 46. At layer 3, Cisco recommends that EF should only be used to mark voice packets.

- Assured Forwarding (AF) – Uses the 5 most significant DSCP bits to indicate queues and drop preference. As shown in the figure, the first 3 most significant bits are used to designate the class.

  - Class 4 is the best queue and Class 1 is the worst queue.

  - The 4th and 5th most significant bits are used to designate the drop preference.

  - The 6th most significant bit is set to zero.

  - The AFxy formula shows how the AF values are calculated.
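Following the bit layout above, the decimal DSCP value for AFxy works out to 8x + 2y, where x is the class and y is the drop preference. A short sketch with hypothetical function names:

```python
def af_to_dscp(x, y):
    """DSCP decimal value for AFxy: class x (1-4), drop preference y (1-3)."""
    if not (1 <= x <= 4 and 1 <= y <= 3):
        raise ValueError("AF classes are 1-4 and drop preferences are 1-3")
    return (x << 3) | (y << 1)   # same as 8 * x + 2 * y


def dscp_to_af(dscp):
    """Name of the AF value for a DSCP number, for example 34 -> 'AF41'."""
    return f"AF{dscp >> 3}{(dscp >> 1) & 0b11}"


print(af_to_dscp(4, 1))  # 34
print(af_to_dscp(1, 3))  # 14
print(dscp_to_af(34))    # AF41
```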

6.2.2.7 – Trust Boundaries

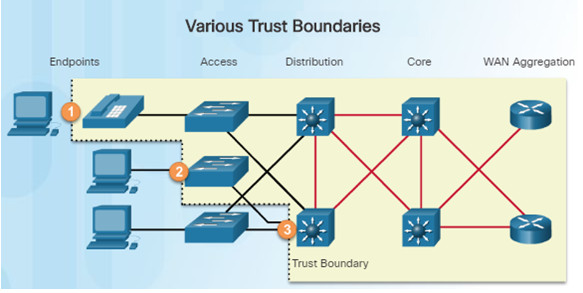

Where should markings occur?

Traffic should be classified and marked as close to its source as possible.

This defines the trust boundary as shown in the figure.

- Trusted endpoints have the capabilities and intelligence to mark application traffic to the appropriate Layer 2 CoS or Layer 3 DSCP values. Examples of trusted endpoints include IP phones, wireless access points, and videoconferencing systems.

- Secure endpoints can have traffic marked at the Layer 2 switch.

- Traffic can also be marked at Layer 3 switches and routers.

Re-marking of traffic is typically necessary.

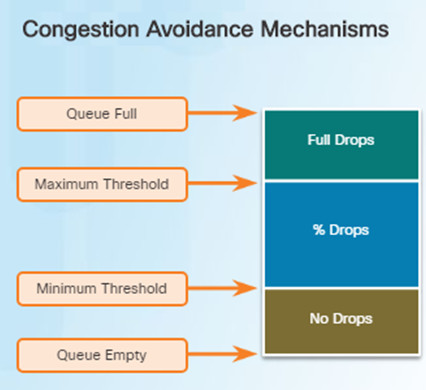

6.2.2.8 – Congestion Avoidance

Congestion avoidance tools monitor network traffic loads in an effort to anticipate and avoid congestion at common network bottlenecks before congestion becomes a problem.

Congestion avoidance is achieved through packet dropping.

These tools monitor the average depth of the queue.

- For example, as the queue fills toward the maximum threshold, a small percentage of packets are dropped.

- When the maximum threshold is passed, all packets are dropped.

The Cisco IOS includes weighted random early detection (WRED) as a possible congestion avoidance solution.

- WRED is a congestion avoidance technique that allows for preferential treatment of which packets will get dropped.

- The WRED algorithm allows for congestion avoidance on network interfaces by providing buffer management and allowing TCP traffic to decrease, or throttle back, before buffers are exhausted.

- Using WRED helps avoid tail drops and maximizes network use and TCP-application performance.
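The threshold behavior can be sketched as a drop-probability function of average queue depth. The minimum threshold, the linear ramp, and the `max_p` value are assumptions borrowed from the general random early detection idea, not values taken from this chapter; WRED would keep a separate set of thresholds per traffic class.

```python
def drop_probability(avg_depth, min_th, max_th, max_p=0.1):
    """Drop probability for a given average queue depth.

    Below min_th nothing is dropped, above max_th everything is dropped,
    and in between the probability rises linearly up to max_p.
    """
    if avg_depth < min_th:
        return 0.0
    if avg_depth > max_th:
        return 1.0
    return max_p * (avg_depth - min_th) / (max_th - min_th)


# Hypothetical thresholds of 20 and 40 packets.
for depth in (10, 30, 40, 50):
    print(depth, drop_probability(depth, min_th=20, max_th=40))
```

Giving lower-priority classes a lower `min_th` means their packets start being dropped earlier, which is the preferential treatment described above.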

There is no congestion avoidance for UDP traffic – such as voice traffic.

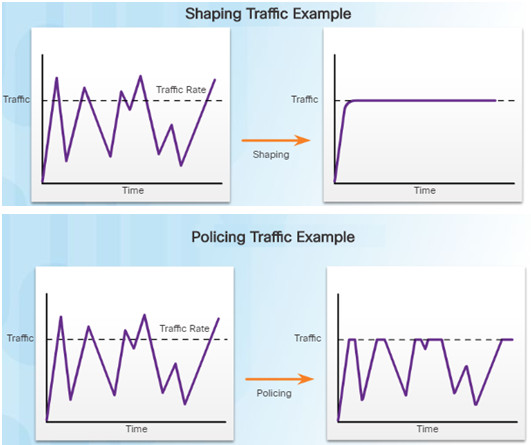

6.2.2.9 – Shaping and Policing

Traffic shaping and policing are two mechanisms provided by the Cisco IOS QoS software to prevent congestion.

Traffic shaping retains excess packets in a queue and then schedules the excess for later transmission over increments of time.

- The result of traffic shaping is a smoothed packet output rate as shown in the figure.

- Shaping requires sufficient memory.

Shaping is used on outbound traffic.

Policing is commonly implemented by service providers to enforce a contracted customer information rate (CIR).

Policing either drops or remarks excess traffic.

Policing is often applied to inbound traffic.
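One common way to model a policer is a token bucket, sketched below. The token bucket, the rate, and the burst size are assumptions for illustration and are not taken from this chapter. A shaper would use the same conform test but hold non-conforming packets in a queue instead of dropping them.

```python
class TokenBucketPolicer:
    """Per-packet conform/exceed decision; no queuing, so excess is dropped."""

    def __init__(self, rate_bps, burst_bytes):
        self.rate_bytes_per_s = rate_bps / 8
        self.burst = burst_bytes
        self.tokens = burst_bytes   # bucket starts full
        self.last = 0.0

    def allow(self, now_s, size_bytes):
        # Refill tokens for the time elapsed, capped at the burst size.
        elapsed = now_s - self.last
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate_bytes_per_s)
        self.last = now_s
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True      # conforming: forward
        return False         # exceeding: drop (or re-mark)


# Hypothetical 8 kb/s contract with a 1500-byte burst.
policer = TokenBucketPolicer(rate_bps=8000, burst_bytes=1500)
results = [policer.allow(t, 1000) for t in (0.0, 0.1, 1.0)]
print(results)  # [True, False, True]
```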

6.3 – Summary

6.3.1 – Conclusion

6.3.1.1 – Chapter 6: Quality of Service

- Explain the purpose and characteristics of QoS.

- Explain how networking devices implement QoS.

New Terms and Commands

- delay
- packet loss
- playout delay buffer
- digital signal processor (DSP)
- Cisco Visual Networking Index (VNI)
- first-in, first-out (FIFO)
- weighted fair queuing (WFQ)
- class-based weighted fair queuing (CBWFQ)
- low latency queuing (LLQ)
- type of service (ToS)
- best-effort model
- Integrated Services (IntServ)
- differentiated services (DiffServ)
- Resource Reservation Protocol (RSVP)
- weighted random early detection (WRED)
- congestion avoidance
- Network Based Application Recognition (NBAR)
- class of service (CoS)
- differentiated services code point (DSCP)
- IEEE 802.1p
- Tag Control Information (TCI) field
- Priority (PRI) field
- type of service (ToS) field
- Traffic Class field
- IP Precedence (IPP) field
- best-effort (BE)
- Expedited Forwarding (EF)
- Assured Forwarding (AF)
- traffic shaping
- traffic policing
